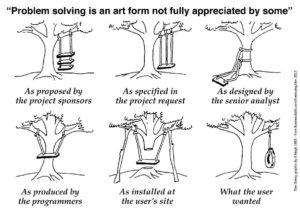

Data science solutions, whether commercial products or custom-built, are unlike other types of software. The classic problem-solution gap, illustrated in the well-known cartoon below, can be hugely exacerbated in data science by the fact that, in the end, the customer may not realize they got something other than what they asked for. Instead, they may have the false impression that the solution is working quite well.

Customers have learned to objectively evaluate most aspects of software, such as performance, usability, or functional fit. What they have not yet learned is how to evaluate the quality of mathematical modeling in data science solutions. The reason is straightforward: most customers lack the expertise required to see that a solution is not optimally suited, or not suited at all, to their needs.

In some situations, objective metrics help. If a solution outperforms the competition on a desired metric, such as the precision of a prediction model, then the customer rightly has reason to be satisfied. Even so, it is not always clear which metric should be used in a given situation, nor is outperforming the competition a guarantee of an optimal solution. Moreover, much of data analytics is not predictive or prescriptive but descriptive, and for this type of analysis objective metrics are rare.
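To illustrate why the choice of metric matters, here is a minimal Python sketch. The two models and their confusion counts are invented for illustration (they do not come from any real project), and precision and recall are just two of many metrics a customer might be shown.

```python
# Hypothetical confusion counts for two models evaluated on the same
# dataset (100 actual positives). All numbers are made up for illustration.
models = {
    "model_x": {"tp": 40, "fp": 10, "fn": 60},
    "model_y": {"tp": 80, "fp": 80, "fn": 20},
}


def precision(tp, fp):
    """Share of predicted positives that are actually positive."""
    return tp / (tp + fp)


def recall(tp, fn):
    """Share of actual positives that the model finds."""
    return tp / (tp + fn)


scores = {
    name: {
        "precision": precision(c["tp"], c["fp"]),
        "recall": recall(c["tp"], c["fn"]),
    }
    for name, c in models.items()
}

# The "winning" model depends entirely on which metric is chosen.
best_by_precision = max(scores, key=lambda name: scores[name]["precision"])
best_by_recall = max(scores, key=lambda name: scores[name]["recall"])

print("Best by precision:", best_by_precision)
print("Best by recall:", best_by_recall)
```

With these assumed counts, each model wins on one metric and loses on the other, so a claim of "outperforming the competition" says little until the metric has been matched to the customer's actual need.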

For example, a customer may look for a solution that ranks some indicators by the trend of their temporal evolution. Yet, a trend is not a formally defined mathematical concept. As a result, it is open to multiple interpretations and mathematical models. How is the customer to judge which one is the most relevant? The same applies to many other common data science problems such as integration of analysis across multiple data sources or aggregated ranking across multiple criteria.
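The ambiguity of "trend" can be made concrete with a small Python sketch. The indicator names and values below are hypothetical, and the two trend definitions (least-squares slope and share of rising steps) are only two plausible readings among many, not a recommended method.

```python
# Hypothetical indicators observed over six periods (illustrative values).
indicators = {
    "steady": [1, 2, 3, 4, 5, 6],
    "late_spike": [0, 0, 0, 0, 0, 10],
    "recovering": [10, 0, 2, 4, 6, 8],
}


def ols_slope(values):
    """Trend as the least-squares slope of value against time index."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    return sxy / sxx


def share_of_rising_steps(values):
    """Trend as the fraction of consecutive steps that go up."""
    steps = list(zip(values, values[1:]))
    return sum(later > earlier for earlier, later in steps) / len(steps)


def rank_by(trend_fn):
    """Rank indicator names from strongest to weakest trend."""
    return sorted(indicators, key=lambda name: trend_fn(indicators[name]), reverse=True)


ranking_by_slope = rank_by(ols_slope)
ranking_by_rising_share = rank_by(share_of_rising_steps)

print("By slope:", ranking_by_slope)
print("By share of rising steps:", ranking_by_rising_share)
```

Under these assumptions, the indicator with a single late spike ranks first by slope but last by share of rising steps. Both rankings are defensible mathematically, and only domain knowledge can say which one answers the customer's question.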

The reader may object that it is up to the data scientists to determine the optimal solution for their customers. The problem is that data scientists, in turn, usually lack sufficient domain expertise to deduce what the customer’s needs really are. The conceptual gap between data science and its users is usually too large to bridge within the timeframes of today’s fast-paced work environment. Moreover, customers must have an independent way of assessing the quality of the proposed solutions: just think of the difference between trusting a manufacturer’s claim and relying on validation by an independent consumer agency.

The frequently proposed way out of this deadlock is to make use of so-called Data or Analytics Translators. The idea is that one can find or train people who know just enough about both the data science and the business side of things to help bridge the gap between them. While such people can certainly help, they can’t ultimately resolve the underlying issue. This is because they are not meant to be experts in either domain and therefore cannot effectively challenge the thinking on either side of the divide.

People with strong expertise in both data science and business are quite rare, but they do exist. They may not be available for full-time hire, but may well be available for consulting projects, even while employed elsewhere. Hiring such experts during project conception or solution acquisition is the best strategy to minimize the risk of failure or sub-optimal results. Given the strategic impact and budgets associated with data science projects, this is well worth the cost.